TL;DR: Designing microservices is always a trade-off between data consistency and service availability. This post shows how data replication and asynchronous communication can enhance service isolation to improve a system’s robustness.

Developing complex distributed systems can be challenging: Logic is spread across different services, each often designed and developed by a separate team working remotely - yet many of these services have to communicate with each other.

Microservices, as an architectural paradigm, streamline the development and deployment of complex systems by emphasizing a crucial characteristic: Service Isolation. This isolation entails each microservice acting as an autonomous unit, encapsulating a distinct part of the business logic. In line with domain-driven design principles, these services also define their own bounded context [1], enabling individual development, updates, and maintenance without impacting the wider system.

However, our business cases are sometimes too complex to be handled by a single service alone. To solve these more sophisticated use cases, several services must interact and exchange data. Communication then often happens through direct API calls between services, e.g., using synchronous protocols like HTTPS or gRPC.

This typical interaction pattern creates a strong coupling by making our services dependent. While generally manageable, these dependencies pose a risk: a network error in a low-level service could easily disrupt substantial portions of our system. Moreover, updating interconnected components becomes tough, potentially causing prolonged maintenance downtime due to the ripple effect of a single update across multiple nodes.

This post will investigate how we could isolate our microservices better and make them more robust against network failures by using asynchronous communication and data redundancy. However, we will also see that improving our system’s availability comes at the cost of temporary data inconsistencies.

Our Example System: A Typical Online Shop

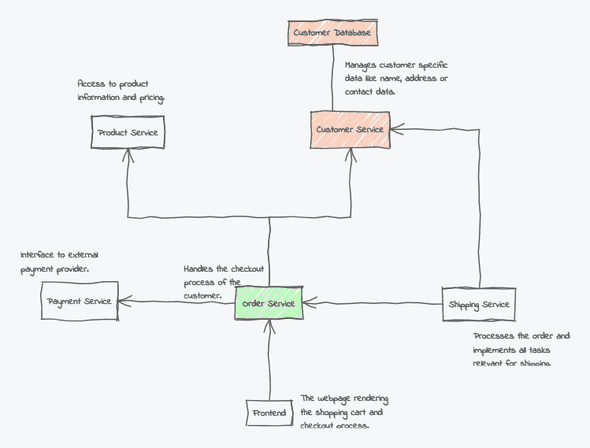

As an example system, we will use a small microservice architecture representing a typical online shop backend (and parts of the frontend).

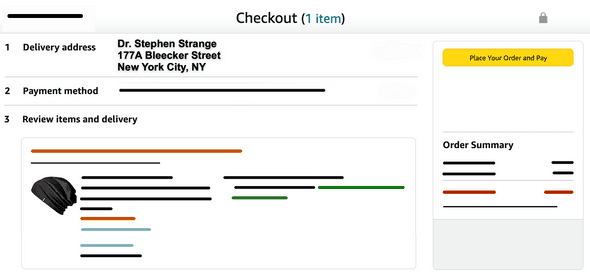

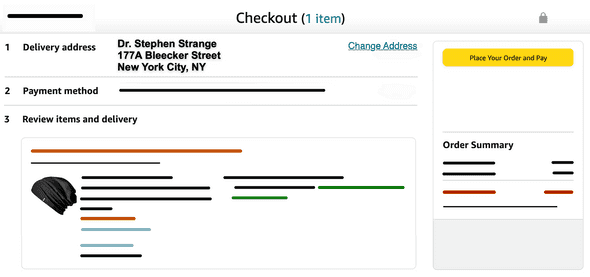

With our shop, customers can select and order different products. Our focus for today lies only on the checkout process, where customers are presented with a list of their chosen products, their address, and payment method and can then proceed with the actual purchase.

The Microservice Architecture

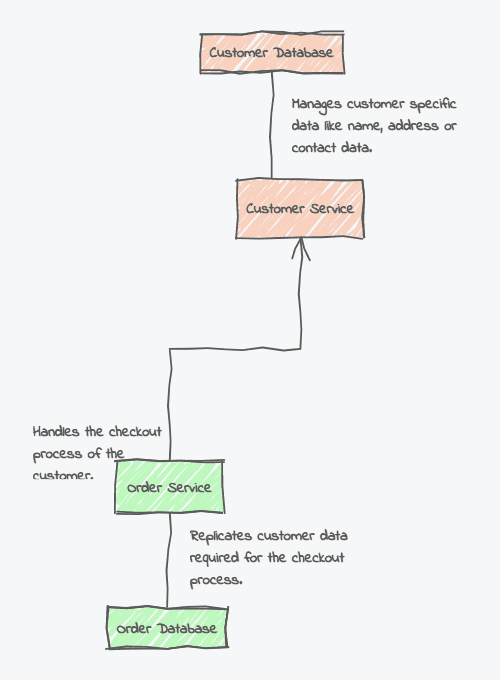

Each part of our domain is implemented through a separate service. For instance, the Customer Service is responsible for storing and managing all customer-relevant master data, like their names, addresses, payment methods, or contact information. Our system uses a shared-nothing approach, so each service manages its own database, which other services cannot access directly.

The second service we will focus on is the Order Service, which implements the whole checkout process, also presenting an overview of the customer address, the payment method, and the chosen products to the user. It requests this information by calling other services directly.

Processes like shipping, payment, etc., are also extracted into separate services. However, for now we will focus mainly on the Customer and Order service.

Of course, this is just a very minimalistic example architecture. Real-world systems are often much more complex. But for our use case, let’s assume this architecture is sufficient.

Managing the Checkout Process

While our Order Service encapsulates most of the logic required for the checkout process, it still needs to collect data like the shipping address or the payment method by calling the Customer Service directly through a synchronous connection.

While this communication creates a strong coupling between both services, it guarantees that the address and payment data we present are always up-to-date since the Customer Service will always provide us with the most recent version of the records stored in its database.
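The direct call described above can be sketched in a few lines. This is only an in-process illustration: the class and method names (`CustomerService.get_customer`, `OrderService.checkout_overview`) are assumptions made for the example, and the method call stands in for what would be an HTTPS or gRPC request in a real system.

```python
from dataclasses import dataclass


@dataclass
class Customer:
    customer_id: str
    name: str
    address: str
    payment_method: str


class CustomerService:
    """Owns the customer master data (hypothetical in-memory store)."""

    def __init__(self) -> None:
        self._db = {}

    def save(self, customer: Customer) -> None:
        self._db[customer.customer_id] = customer

    def get_customer(self, customer_id: str) -> Customer:
        # In a real system this would be a remote endpoint, not a method call.
        return self._db[customer_id]


class OrderService:
    """Builds the checkout overview by calling the Customer Service directly."""

    def __init__(self, customer_service: CustomerService) -> None:
        self._customers = customer_service

    def checkout_overview(self, customer_id: str, products: list) -> dict:
        # Synchronous call: we block until the Customer Service answers.
        customer = self._customers.get_customer(customer_id)
        return {
            "products": products,
            "address": customer.address,
            "payment_method": customer.payment_method,
        }


customers = CustomerService()
customers.save(Customer("c1", "Ada", "1 Old Street", "credit card"))
orders = OrderService(customers)

# The customer changes their address shortly before checkout.
customers.save(Customer("c1", "Ada", "2 New Street", "credit card"))
overview = orders.checkout_overview("c1", ["book"])
print(overview["address"])  # 2 New Street
```

Because the Order Service keeps no copy of its own, it can never show an outdated address, but it also cannot answer without the Customer Service.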

When the network functions within standard parameters, this solution works well and offers seamless communication. However, network unpredictability, caused by issues like misconfigurations, sudden surges in requests, or potential DDoS attacks, can render servers unreachable.

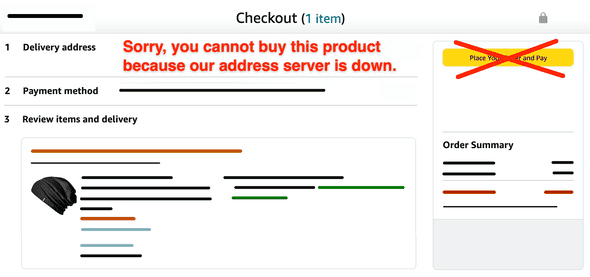

What makes it worse is that the strong coupling we implemented between our services makes our systems even more vulnerable to such network failures:

Imagine the user has chosen some products and proceeds to the checkout page, where the Order Service tries to fetch the customer’s address from the Customer Service, which, unfortunately, has become unresponsive due to a network issue. Instead of showing the correct information, we would have to show the user an error message.
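A minimal sketch of this failure path, assuming a hypothetical `fetch_customer_address` helper that simulates the remote call and a `network_up` flag that simulates the outage:

```python
class CustomerServiceUnavailable(Exception):
    """Raised when the (simulated) Customer Service cannot be reached."""


def fetch_customer_address(customer_id: str, network_up: bool) -> str:
    # Stand-in for a synchronous HTTPS/gRPC call to the Customer Service.
    if not network_up:
        raise CustomerServiceUnavailable("customer service did not respond")
    return "1 Old Street"


def render_checkout(customer_id: str, network_up: bool) -> str:
    try:
        address = fetch_customer_address(customer_id, network_up)
    except CustomerServiceUnavailable:
        # The Order Service has no other source for the address,
        # so the whole checkout page degrades to an error.
        return "Sorry, checkout is currently unavailable."
    return f"Ship to: {address}"


healthy_page = render_checkout("c1", network_up=True)
broken_page = render_checkout("c1", network_up=False)
print(healthy_page)
print(broken_page)
```

The Order Service itself is running fine in both cases; only its dependency decides whether the customer can buy.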

That’s really daunting: We used microservices in the first place to make our services more independent and fortify our system against failures, and now, a single service breakdown halts our core business functions. This situation is particularly troubling as it would cost us real money: The customer’s purchase was disrupted, potentially leading them to seek the product elsewhere.

Undoubtedly, the strong interdependence between our services is the root cause of this error. Still, we have to exchange data somehow, so coupling can’t be avoided - or is there a better way to transfer information while keeping the coupling to a minimum?

As you may have guessed, there is a different approach. But before going into details, we must first dive a bit deeper into distributed systems and their properties - welcome to the CAP theorem!

The CAP Theorem - Trade-Offs in Distributed Systems

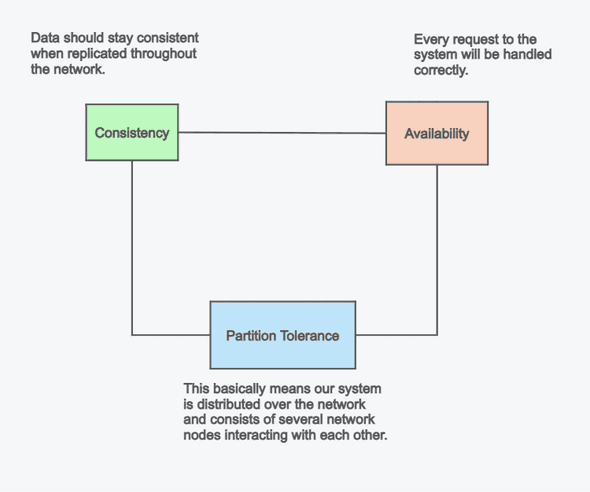

The CAP theorem, first postulated by Eric Brewer [2], is a rule that applies to distributed computer systems. It states that such a system cannot guarantee consistency, availability, and partition tolerance all at once. Since network partitions cannot be ruled out in practice, the real choice arises when one occurs: the system must then give up either availability or data consistency.

If we look at our example architecture from above, we implicitly chose consistency: Our customer data is stored at a single location, and all access goes through the Customer Service. In that way, we guarantee that customer-related information is always up-to-date. However, according to the CAP theorem, by focusing on consistency, we can no longer guarantee availability when a network error occurs. If we cannot reach the Customer Service anymore, our checkout process can’t continue. The customer data is still consistent - we just cannot reach it!

So, was it a good choice to favor consistency over availability? Well, it depends… While consistency is essential in many cases, there are often scenarios where availability is preferable, at the price of potentially inconsistent data.
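The two options can be illustrated with a toy model. It is not a real distributed system: `Node`, `read_consistent`, and `read_available` are hypothetical names, and the `partitioned` flag simply simulates a network error between the caller and the primary copy.

```python
class PartitionError(Exception):
    """The authoritative copy cannot be reached."""


class Node:
    def __init__(self, value: str) -> None:
        self.value = value


def read_consistent(primary: Node, partitioned: bool) -> str:
    # Favor consistency: only the primary may answer,
    # so a partition means no answer at all.
    if partitioned:
        raise PartitionError("primary unreachable")
    return primary.value


def read_available(primary: Node, replica: Node, partitioned: bool) -> str:
    # Favor availability: fall back to the local replica, which may be stale.
    if partitioned:
        return replica.value
    return primary.value


primary = Node("2 New Street")  # latest write
replica = Node("1 Old Street")  # has not received the update yet

available_answer = read_available(primary, replica, partitioned=True)
try:
    consistent_answer = read_consistent(primary, partitioned=True)
except PartitionError:
    consistent_answer = None  # correct data exists, but we cannot reach it
```

During the simulated partition, the available read returns an outdated address while the consistent read returns nothing. Neither is free of drawbacks, which is exactly the trade-off.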

Let’s see how we would have to change our system to favor availability and what the implications would be.

Redesigning our System towards Availability

While our initial design intended to use one service per bounded context, some of our services were built around entities rather than actual use cases. Take, for instance, our Customer Service: Its primary purpose is to manage customer entities, and as such, it’s little more than a gateway to the underlying database. Services like these are also called entity services [3], and can be problematic as they force other services to create strong couplings with them.

Unfortunately, this coupling also drives our architecture towards Consistency and away from Availability. So let’s see how we could untie our connections a bit.

Step 1: Replicating Data

Our Order Service is obviously not autonomous enough, requiring additional information from other services to complete the checkout task. To address this, we will establish a dedicated database for the Order Service where all relevant customer data is replicated.

By doing so, the Order Service will be self-sufficient without relying on external providers. This setup guarantees that even if the Customer Service is inaccessible, our Order Service will remain operational.

But wait, does that mean that we have to store everything twice? And by replicating the data, are we not violating the DRY [4] principle? Well, that’s the price we have to pay for better availability. There is no free lunch, not even when developing complex distributed systems. But note that both services do not necessarily have to store the same view of the data. While the Customer Service may keep a more detailed version, like a primary or secondary address or even a complete history, the Order Service only needs the most rudimentary information to complete the checkout.
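To make the idea of two different views concrete, here is a small sketch. The field names and the `to_checkout_view` helper are assumptions chosen for illustration; the post does not prescribe a schema.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class CustomerRecord:
    """Full master record as the Customer Service might store it (assumed fields)."""
    customer_id: str
    name: str
    primary_address: str
    secondary_address: Optional[str] = None
    address_history: list = field(default_factory=list)
    payment_method: str = "invoice"


@dataclass
class CheckoutCustomer:
    """Reduced copy kept by the Order Service: only what checkout needs."""
    customer_id: str
    shipping_address: str
    payment_method: str


def to_checkout_view(record: CustomerRecord) -> CheckoutCustomer:
    # Project the detailed record onto the fields the checkout page shows.
    return CheckoutCustomer(
        customer_id=record.customer_id,
        shipping_address=record.primary_address,
        payment_method=record.payment_method,
    )


# The Order Service's own database (here just a dict keyed by customer id).
order_db = {}
record = CustomerRecord("c1", "Ada", "2 New Street", address_history=["1 Old Street"])
order_db[record.customer_id] = to_checkout_view(record)
```

The replica duplicates a few values, not the whole model: history and secondary addresses stay with the Customer Service.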

While our change makes the service self-contained, it has a drawback:

Since we now store our data at two locations, how do we keep both databases in sync, e.g., when a customer updates their address?

Well, to solve this, we have to rethink how our service communication works…

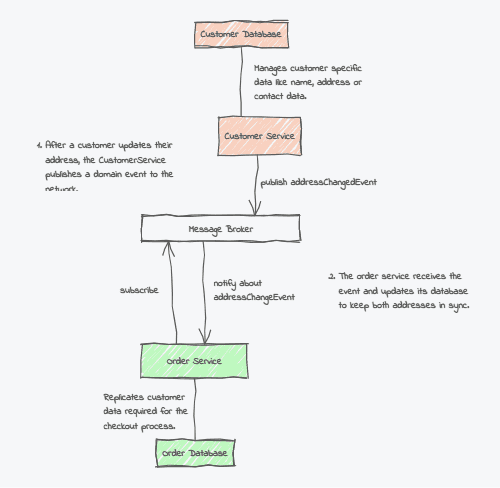

Step 2: Using Asynchronous Communication

While we could reduce the interconnection between our two services by using a dedicated database for each, we still need some kind of connection to initially transfer the data and keep it in sync later. Our current communication model uses direct synchronous API calls. Again, this creates a strong dependency because the caller needs to know the exact details - like the endpoint address - of the API it wants to call.

We can reduce the coupling here by switching from the technical level towards a more domain-driven way of thinking: A user changing their address is an essential action in our system. Hence, we should also consider it a domain event that needs to be propagated, and services interested in it should get notified about the change and update their state accordingly.

This is a good use case for applying the asynchronous Publisher-Subscriber pattern: All services are connected to a message broker. In case of an address change, the Customer Service publishes a customerAddressChanged event, and other participants, like the Order Service, can subscribe to this type of event and get notified when it gets distributed. Upon arrival, they update their database record, ensuring all services have the same consistent dataset again.
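The flow can be sketched with a tiny in-memory broker. This is a stand-in for a real message broker, not a recommendation for one: `MessageBroker`, `deliver_pending`, and the event payload shape are all assumptions made for the example. Only the event name `customerAddressChanged` comes from the text above.

```python
from collections import defaultdict
from typing import Callable

Handler = Callable[[dict], None]


class MessageBroker:
    """Minimal in-process stand-in for a real broker."""

    def __init__(self) -> None:
        self._subscribers = defaultdict(list)
        self._queue = []

    def subscribe(self, event_type: str, handler: Handler) -> None:
        self._subscribers[event_type].append(handler)

    def publish(self, event_type: str, event: dict) -> None:
        # The publisher returns immediately; delivery happens later.
        self._queue.append((event_type, event))

    def deliver_pending(self) -> None:
        while self._queue:
            event_type, event = self._queue.pop(0)
            for handler in self._subscribers[event_type]:
                handler(event)


class CustomerService:
    def __init__(self, broker: MessageBroker) -> None:
        self.addresses = {}
        self._broker = broker

    def change_address(self, customer_id: str, new_address: str) -> None:
        self.addresses[customer_id] = new_address
        self._broker.publish(
            "customerAddressChanged",
            {"customer_id": customer_id, "address": new_address},
        )


class OrderService:
    def __init__(self, broker: MessageBroker) -> None:
        self.addresses = {}  # the Order Service's own replica
        broker.subscribe("customerAddressChanged", self._on_address_changed)

    def _on_address_changed(self, event: dict) -> None:
        self.addresses[event["customer_id"]] = event["address"]


broker = MessageBroker()
customers = CustomerService(broker)
orders = OrderService(broker)

customers.change_address("c1", "2 New Street")
before = orders.addresses.get("c1")  # not delivered yet
broker.deliver_pending()
after = orders.addresses.get("c1")   # replica has caught up
```

Note that the Customer Service never references the Order Service. Both only know the broker and the event type, which is where the looser coupling comes from.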

The result is not so bad: In case of a (partial) network failure, the user can continue the checkout process, and the data we operate on should remain up-to-date.

That’s definitely an improvement to our initial design. Still, we’re not done yet. There is one crucial edge case we have to take care of.

Step 3: Handling Data Inconsistencies

By improving our system’s availability, we unfortunately reduced its consistency. Think, for instance, about what would happen if our message broker went down due to a network error, or if, for whatever reason, a recent address change were not propagated correctly to other services.

In that case, the Order Service would show an outdated address during checkout. While the service is still reachable, we cannot guarantee that its data is consistent with the rest of the system.
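A deliberately simplified sketch of this situation, with two dictionaries standing in for the two databases and a `broker_up` flag (an assumption for the example) deciding whether the change event arrives:

```python
customer_db = {"c1": "1 Old Street"}  # owned by the Customer Service
order_db = {"c1": "1 Old Street"}     # replica owned by the Order Service


def change_address(customer_id: str, new_address: str, broker_up: bool) -> None:
    customer_db[customer_id] = new_address
    if broker_up:
        # Normal case: the event reaches the Order Service, which updates its copy.
        order_db[customer_id] = new_address
    # Otherwise the event is simply lost in this simplified model.
    # Real brokers often offer retries or durable queues; that is not modelled here.


def checkout_address(customer_id: str) -> str:
    # The Order Service answers from its own database, so this never fails.
    return order_db[customer_id]


change_address("c1", "2 New Street", broker_up=False)
shown = checkout_address("c1")
is_stale = shown != customer_db["c1"]
print(shown, is_stale)
```

The checkout still works, but it works with yesterday's data.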

Luckily, we have an easy workaround: We can add a Change Address button next to the address field, allowing the user to update the information manually if necessary.

Sure, this may be annoying for the user - “I just updated the address in my profile; why does this not show up here?” - but it’s still better than canceling the whole checkout process due to an unreachable service.

Of course, before the shipping starts, we somehow have to bring our different addresses in sync again, but this can happen long after the checkout is completed. That’s why we speak of eventual consistency [5] here: there will be a point in time when our data is synchronized again, although this may not happen immediately.
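How exactly the addresses are brought back in sync is left open here. One possible strategy, shown purely as an assumption, is last-write-wins: every address carries a timestamp, and before shipping, the newer version is copied to both sides. The names `AddressVersion`, `change_address_at_checkout`, and `reconcile` are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class AddressVersion:
    address: str
    updated_at: int  # logical timestamp; larger means newer (assumption)


def change_address_at_checkout(replica: dict, customer_id: str,
                               address: str, now: int) -> None:
    """What a 'Change Address' button might do: update the Order Service's copy."""
    replica[customer_id] = AddressVersion(address, now)


def reconcile(master: dict, replica: dict, customer_id: str) -> AddressVersion:
    """Last-write-wins: copy the newer version to the other side."""
    m, r = master[customer_id], replica[customer_id]
    newest = m if m.updated_at >= r.updated_at else r
    master[customer_id] = newest
    replica[customer_id] = newest
    return newest


# Profile was updated at t=10, but the event never reached the Order Service.
master = {"c1": AddressVersion("2 New Street", 10)}
replica = {"c1": AddressVersion("1 Old Street", 5)}

# Case 1: the user notices and corrects the address during checkout at t=12.
change_address_at_checkout(replica, "c1", "2 New Street", now=12)
final = reconcile(master, replica, "c1")

# Case 2: the user does not notice; the newer profile address still wins.
master2 = {"c2": AddressVersion("7 Hill Road", 10)}
replica2 = {"c2": AddressVersion("3 Lake Road", 5)}
final2 = reconcile(master2, replica2, "c2")
```

Last-write-wins is simple but not always right, for example when a customer intentionally ships one order to a different address. Whether it fits depends on the business rules.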

Conclusion

Developing complex distributed systems, especially within microservices, presents a balance between autonomy and interdependence. While synchronous communication ensures data consistency, it creates a strong coupling between services and amplifies vulnerability to network failures.

Data replication and asynchronous communication can help isolate services and strengthen system resilience but may introduce occasional data inconsistencies. Which approach to choose depends heavily on the use case. In our concrete example, there is a clear benefit of being available over being consistent since potential inconsistencies can be corrected manually if necessary. Also, since a customer’s address rarely changes, it is unlikely that an update will coincide with a network outage and fail to arrive.

But there are also cases where consistency may be more critical: Showing the total order sum requires us to have accurate pricing information at any time. This is a scenario where eventual consistency would not be sufficient. The same is true for validating the payment. We must ensure that the payment information our system processes is correct when finishing the order. Otherwise, we cannot continue with the shipping.
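For data like prices, a service might therefore deliberately keep the synchronous lookup and accept that the operation fails when the source is unreachable. A minimal sketch, assuming a hypothetical `fetch_current_price` call and prices stored as integer cents:

```python
class PricingUnavailable(Exception):
    """The (simulated) authoritative price source cannot be reached."""


def fetch_current_price(product_id: str, price_db: dict, reachable: bool) -> int:
    # Stand-in for a synchronous call to whichever service owns the prices.
    if not reachable:
        raise PricingUnavailable(product_id)
    return price_db[product_id]  # price in cents


def order_total(items: dict, price_db: dict, reachable: bool = True) -> int:
    """Sum up the order using authoritative prices only, never a cached copy."""
    total = 0
    for product_id, quantity in items.items():
        total += fetch_current_price(product_id, price_db, reachable) * quantity
    return total


price_db = {"book": 1999, "pen": 250}
total = order_total({"book": 1, "pen": 2}, price_db)
print(total)  # 2499
```

Here an outage raises `PricingUnavailable` instead of silently charging an outdated price, which is the opposite choice from the one made for addresses.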

In essence, the optimal choice between consistency and availability always hinges on the specific demands of the concrete domain and business case.

Title image © stock.adobe.com.

1. Some more details about the term bounded context: Bounded Context - Martin Fowler

2. A good description of the CAP theorem can be found here: What is the CAP theorem?

3. Entity services are often considered an anti-pattern. See, for instance, a discussion about them here: Entity Services is an Antipattern

4. Don’t Repeat Yourself: DRY Software Design Principle

5. This blog contains a good description of what is meant by eventual consistency: Think Microservices